How should self-driving cars handle potentially fatal accidents?

Turns out your answer depends a lot on whether you’re in the car or in the crosswalk.

Self-driving cars sound awesome: less traffic, fewer accidents, and more free mental bandwidth while commuting. But nothing is perfect, and some scientists are beginning to examine how automated cars should handle accidents:

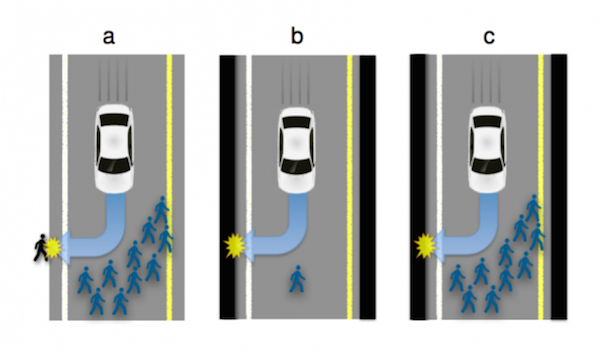

Here is the nature of the dilemma. Imagine that in the not-too-distant future, you own a self-driving car. One day, while you are riding along, an unfortunate set of events causes the car to head toward a crowd of 10 people crossing the road. It cannot stop in time, but it can avoid killing the 10 people by steering into a wall. However, this collision would kill you, the owner and occupant. What should it do?

One way to approach this kind of problem is to act in a way that minimizes the loss of life. By this way of thinking, killing one person is better than killing 10.

But that approach may have other consequences. If fewer people buy self-driving cars because they are programmed to sacrifice their owners, then more people are likely to die because ordinary cars are involved in so many more accidents. The result is a Catch-22 situation.

So you could argue in the abstract all day about what’s right, but if you’re able to take yourself out of the equation, the math is what it is.

If it were up to you to decide how autonomous cars handle accidents, what would you program them to do?